*Free registration is required to use the toolkits provided within HIPxChange. This information is required by our funders and is used to determine the impact of the materials posted on the website.

Background

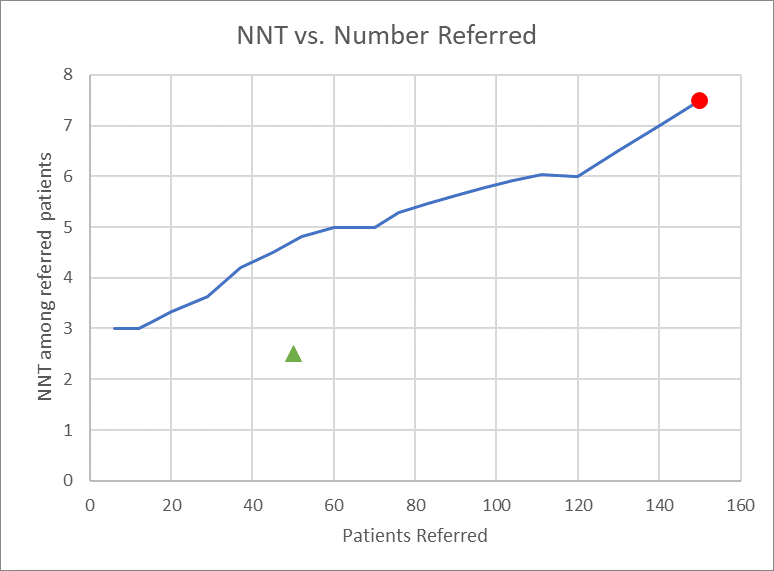

Recently, healthcare has seen a sharp rise in the implementation of machine learning-derived algorithms for predicting risk across a broad range of clinical scenarios. Often, the performance of these algorithms is evaluated by comparing the area under the receiver operating characteristic (ROC) curve (AUC). As a single number, however, the AUC may do a poor job of conveying an algorithm’s performance for a predictive task in a clinical context that requires a particular balance of sensitivity and specificity. Clinicians are generally interested in applying an algorithm to aid in risk stratification for a particular scenario, such as ruling out a rare disease, confirming a particular diagnosis, or reducing population risk via an intervention. Estimates of the relative risk reduction of an intervention are often available prior to implementation; combined with data from the ROC analysis, they can be used to estimate the effectiveness of the intervention at various thresholds. One such estimate of effectiveness is a curve that plots, for every risk threshold the algorithm can produce, the projected number of patients referred during a given time period against the number needed to treat (NNT) among the referred patients, based on the algorithm’s performance in test data.

ROC curves are created from classification statistics: the counts of true positives (TP), false positives (FP), true negatives (TN) and false negatives (FN) at various threshold levels. These data can be used to extrapolate both the number of patients identified and the number needed to treat (NNT). The number of patients identified at a given threshold is calculated by taking the proportion of TP and FP results (all patients flagged “positive” by a model) in the test dataset and multiplying it by the patient volume of interest (this could be a weekly or monthly volume, or a total patient population). NNT is estimated by incorporating an estimated relative risk reduction for an intervention. The absolute risk for patients above a given threshold is calculated as the ratio of true positives to all positives among patients at or above the risk threshold in a test dataset, TP/(TP+FP). This absolute risk is multiplied by the relative risk reduction to estimate an absolute risk reduction, and the inverse of the absolute risk reduction gives the NNT. These projected performance measures can be used to create plots that visually describe the tradeoff between risk reduction and number of patients identified.
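The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the toolkit's own implementation (the toolkit is an Excel workbook); the function name `referred_and_nnt`, its parameter names, and the example counts are all hypothetical.

```python
def referred_and_nnt(tp, fp, n_test, volume, rrr):
    """Project patients referred and NNT at a single risk threshold.

    tp, fp  : true and false positives flagged at this threshold in the test set
    n_test  : total number of patients in the test set
    volume  : patient volume of interest (e.g. monthly visits)
    rrr     : estimated relative risk reduction of the intervention (0 to 1)
    """
    flagged = tp + fp
    if flagged == 0:
        raise ValueError("no patients are flagged at this threshold")
    referred = flagged / n_test * volume   # share flagged, scaled to the volume
    absolute_risk = tp / flagged           # TP / (TP + FP)
    arr = absolute_risk * rrr              # absolute risk reduction
    nnt = float("inf") if arr == 0 else 1 / arr
    return referred, nnt


# Hypothetical counts (TP, FP) at three thresholds in a 1,000-patient test set.
counts = {0.2: (90, 410), 0.5: (40, 160), 0.8: (15, 15)}
for threshold, (tp, fp) in counts.items():
    referred, nnt = referred_and_nnt(tp, fp, n_test=1000, volume=500, rrr=0.25)
    print(f"threshold {threshold}: {referred:.0f} referred, NNT {nnt:.1f}")
```

With these made-up counts, raising the threshold refers fewer patients and lowers the NNT, which is the tradeoff the plot is meant to show.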

The figure above shows an NNT vs. Number Referred plot. The red circle indicates the result of a “refer everyone” strategy, in which the threshold for a model is set so low that all patients are referred; this is equivalent to simply flagging all patients positive. The green triangle represents the theoretical ideal point, in which a perfectly functioning model identifies only those patients who are true positives, and no false positives. The Number Needed to Treat Thresholding Toolkit allows users to generate similar graphs, either from raw data on an algorithm’s performance in a given population, or by applying an algorithm with known test characteristics at various thresholds to a theoretical population.
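The two reference points follow from the same arithmetic. The sketch below assumes that the ideal model flags every patient who will have the outcome (so the absolute risk among those referred is 1), and that under “refer everyone” the absolute risk among those referred equals the prevalence. The function name and example inputs are hypothetical.

```python
def reference_points(prevalence, volume, rrr):
    """Return (referred, NNT) for the two reference strategies.

    prevalence : proportion of patients with the outcome (0 to 1, exclusive of 0)
    volume     : patient volume of interest
    rrr        : estimated relative risk reduction of the intervention
    """
    # Refer everyone: all patients are flagged, so risk among referred = prevalence.
    refer_everyone = (volume, 1 / (prevalence * rrr))
    # Ideal model (assumption: all true positives and no false positives are
    # flagged), so risk among referred = 1 and NNT reduces to 1 / RRR.
    ideal = (prevalence * volume, 1 / rrr)
    return refer_everyone, ideal


everyone, ideal = reference_points(prevalence=0.1, volume=500, rrr=0.25)
print("refer everyone:", everyone)
print("ideal point:", ideal)
```

Every point on a model's curve should fall between these two extremes: no threshold can refer more patients than the full volume, and none can achieve an NNT below 1/RRR.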

Who should use this toolkit?

This toolkit is intended for clinicians, policymakers, administrators or researchers interested in projecting the effectiveness of an intervention in a given population at various risk stratification thresholds. It allows users to generate NNT vs. Patients Identified plots from ROC curves and intervention effectiveness estimates.

What does the toolkit contain?

An Excel workbook that includes test datasets and graphs.

How should these tools be used?

The Excel workbook allows users to produce graphs similar to the one shown in the Background section, using the information needed to generate the ROC curve together with data from the test set.

- “From Counts” tab: This allows direct calculation of true and false positive rates to generate an ROC curve, as well as the number of patients referred (all patients flagged positive) and the NNT for the intervention in the test set. To generate these estimates, the user fills in the highlighted fields: the test dataset size and prevalence of positive outcomes, the estimated relative risk reduction of the intervention, and the counts of true and false positives flagged at each threshold level.

- “From Test Characteristics” tab: The calculations here are the same as in the “From Counts” tab, but this sheet generates both estimated counts and the referral and NNT information for a population of known characteristics. Instead of empiric counts at each threshold, the user inputs the test characteristics at each threshold, and the sheet generates the counts in the population based on prevalence.
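The “From Test Characteristics” approach can be sketched as follows. This is an illustration rather than a transcription of the workbook's formulas: it assumes the test characteristics entered are sensitivity and specificity at each threshold, and the function name, parameter names, and example values are hypothetical.

```python
def project_from_characteristics(sensitivity, specificity, prevalence,
                                 population, rrr):
    """Estimate counts, patients referred, and NNT in a theoretical population.

    sensitivity, specificity : test characteristics at one threshold (assumed inputs)
    prevalence               : proportion of the population with the outcome
    population               : size of the theoretical population
    rrr                      : estimated relative risk reduction of the intervention
    """
    positives = prevalence * population
    negatives = population - positives
    tp = sensitivity * positives           # expected true positives
    fp = (1 - specificity) * negatives     # expected false positives
    referred = tp + fp                     # all patients flagged positive
    if referred == 0:
        raise ValueError("no patients are flagged at this threshold")
    arr = tp / referred * rrr              # absolute risk reduction among referred
    nnt = float("inf") if arr == 0 else 1 / arr
    return {"tp": tp, "fp": fp, "referred": referred, "nnt": nnt}


result = project_from_characteristics(
    sensitivity=0.8, specificity=0.9, prevalence=0.1, population=1000, rrr=0.25
)
print(result)
```

Once the expected TP and FP counts are derived from prevalence, the remaining steps are the same as when starting from empiric counts.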

Development of this toolkit

The Number Needed to Treat Thresholding toolkit was developed by researchers and clinicians (Principal Investigator: Brian Patterson) at the University of Wisconsin-Madison School of Medicine & Public Health – BerbeeWalsh Department of Emergency Medicine.

This project was supported by grant K08HS024558 from the Agency for Healthcare Research and Quality. Additional support was provided by the University of Wisconsin School of Medicine and Public Health’s Health Innovation Program (HIP), the Wisconsin Partnership Program, and the Community-Academic Partnerships core of the University of Wisconsin Institute for Clinical and Translational Research (UW ICTR), grant 9 U54 TR000021 from the National Center for Advancing Translational Sciences (previously grant 1 UL1 RR025011 from the National Center for Research Resources). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health or other funders.

Please send questions, comments and suggestions to HIPxChange@hip.wisc.edu.

References

Patterson BW, Engstrom CJ, Sah V, Smith MA, Mendonça EA, Pulia MS, Repplinger MD, Hamedani AG, Page D, Shah MN. Training and Interpreting Machine Learning Algorithms to Evaluate Fall Risk After Emergency Department Visits. Med Care. 2019 Jul;57(7):560-566.

Toolkit Citation

We suggest using the following citation for this toolkit:

Patterson, Brian W. Number Needed to Treat Thresholding Toolkit. University of Wisconsin-Madison BerbeeWalsh Department of Emergency Medicine and the UW Health Innovation Program. Madison, WI; 2019. Available at http://www.hipxchange.org/NNT.